An ETL pipeline extracts data from different sources, transforms it into a usable format, and loads it into a target destination, such as a data warehouse, for analysis. This process helps create a single, unified view of a company’s data.

Whether you’re migrating to the cloud, building a data warehouse, or integrating real-time analytics, understanding ETL pipelines is crucial for long-term digital success.

1. What Is an ETL Pipeline?

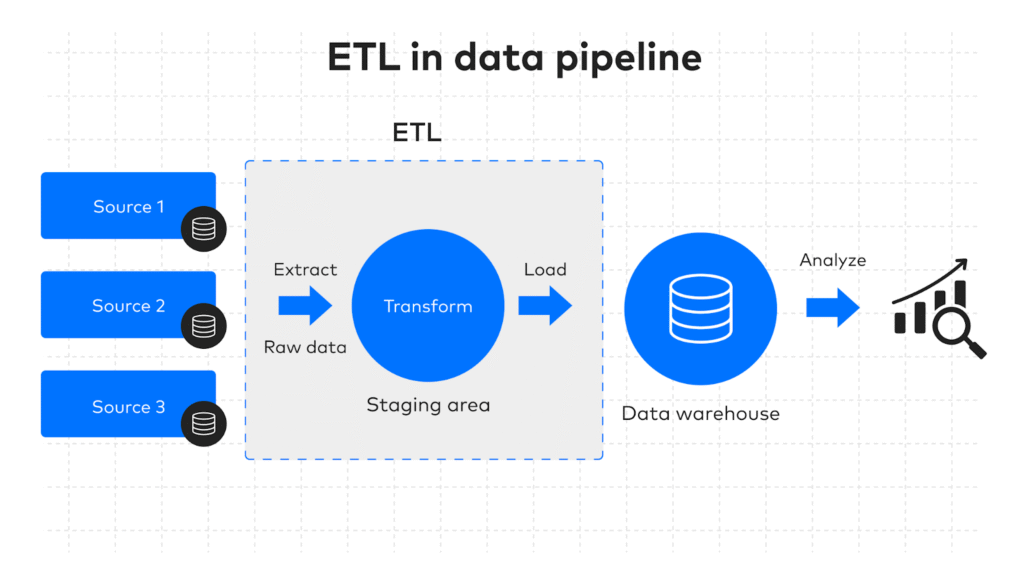

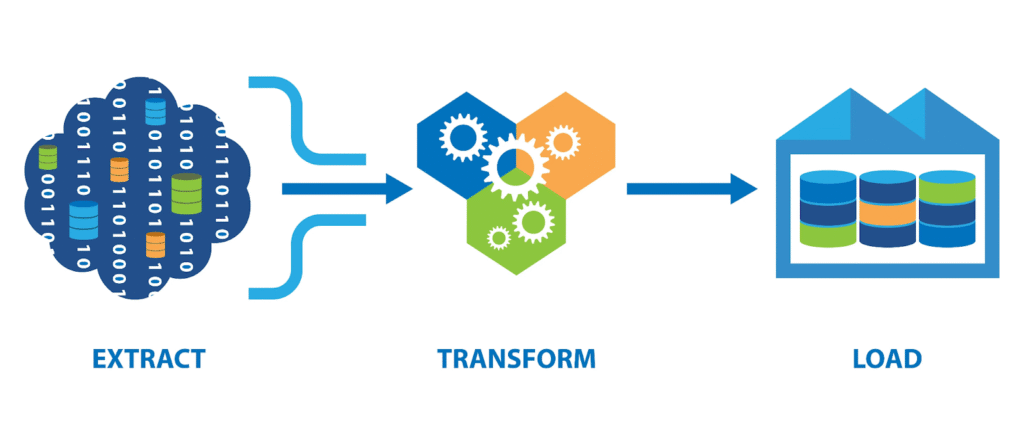

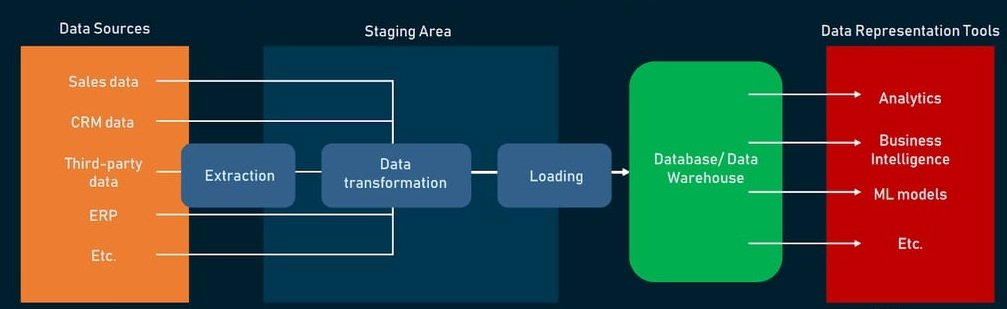

ETL pipelines are the backbone of modern data integration, enabling organizations to efficiently manage and synchronize information between different systems. The term “ETL” stands for Extract, Transform, Load — three core steps that ensure raw data from multiple sources is cleaned, formatted, and delivered to a target destination such as a cloud database, data warehouse, or analytics platform.

In simple terms, an ETL pipeline acts as a bridge between your data sources and your decision-making tools. It doesn’t just move data — it ensures that the data is accurate, consistent, and ready for business insights.

Key Components of ETL Pipelines

- Extract – Pulls data from diverse sources like SQL databases, APIs, ERP systems, or flat files.

- Transform – Cleans, enriches, and formats the data for analysis (e.g., removing duplicates, converting data types, or merging datasets).

- Load – Stores the transformed data into its final location, such as Google BigQuery, Amazon Redshift, or Azure Data Lake.
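The three components above can be sketched in a few lines of Python. This is a minimal illustration, not a production design: the sample CSV data, the `orders` table, and the function names are all hypothetical, and an in-memory SQLite database stands in for a real warehouse such as BigQuery or Redshift.

```python
import csv
import io
import sqlite3

# Hypothetical source data: a CSV export with one duplicate row and untyped values.
RAW_CSV = """order_id,customer,amount
1001,alice,19.99
1002,bob,5.00
1001,alice,19.99
1003,carol,42.50
"""


def extract(csv_text):
    """Extract: read raw rows from a flat-file source."""
    return list(csv.DictReader(io.StringIO(csv_text)))


def transform(rows):
    """Transform: remove duplicate orders and convert data types."""
    seen = set()
    cleaned = []
    for row in rows:
        order_id = int(row["order_id"])
        if order_id in seen:
            continue  # skip rows whose order_id was already processed
        seen.add(order_id)
        cleaned.append(
            (order_id, row["customer"].strip().title(), float(row["amount"]))
        )
    return cleaned


def load(rows, conn):
    """Load: write the cleaned rows into the target table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders "
        "(order_id INTEGER PRIMARY KEY, customer TEXT, amount REAL)"
    )
    conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", rows)
    conn.commit()


conn = sqlite3.connect(":memory:")  # stand-in for a real data warehouse
load(transform(extract(RAW_CSV)), conn)
row_count = conn.execute("SELECT COUNT(*) FROM orders").fetchone()[0]
print(row_count)  # 3: the duplicate order was dropped before loading
```

Keeping each stage in its own function means a source or target can be swapped without touching the other two stages.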

Example Use Cases

- E-commerce Data Migration: Moving product and sales data from MySQL to Azure Data Lake for scalable analytics.

- CRM Data Cleanup: Transforming and standardizing customer records before loading them into Power BI dashboards.

- ERP System Integration: Syncing financial and operational data into a central cloud-based dashboard for real-time reporting.

By automating these steps, ETL pipelines save hours of manual work, reduce human errors, and ensure businesses always work with reliable, up-to-date information. This makes them essential for cloud data sync, reporting, and business intelligence.

2. Why ETL Pipelines Are Critical for Cloud Data Sync

In today’s data-driven business environment, cloud systems rely on accurate, up-to-date information to deliver value. ETL pipelines play a crucial role in ensuring that this data sync process is smooth, efficient, and error-free. Without a well-structured ETL framework, syncing data between platforms can quickly become messy, resulting in inconsistencies, duplicates, or outdated information.

By automating the Extract, Transform, Load process, ETL pipelines ensure that only clean, validated, and structured data flows into your cloud systems. This not only improves the reliability of your data but also empowers your teams to make faster, data-backed decisions.

Key Benefits of ETL Pipelines for Cloud Data Sync

- Automates repetitive data flows – Eliminates the need for manual data entry and repetitive updates.

- Reduces human error – Ensures accuracy by applying consistent transformation rules and validations.

- Enables real-time business insights – Provides decision-makers with timely, high-quality data for analytics and reporting.

- Improves cross-platform compatibility – Converts data into formats that can be easily used across multiple cloud applications.

For businesses operating in multi-cloud or hybrid environments, ETL pipelines act as the glue that keeps all systems in sync. By maintaining a consistent flow of accurate information, they power everything from real-time dashboards to AI-driven analytics.

3. The ETL Process in Action

To understand the true value of ETL pipelines, it’s important to see how they work in real-world scenarios. The ETL process — Extract, Transform, Load — forms the backbone of modern cloud data integration, ensuring that businesses can move from raw, unorganized information to meaningful, analytics-ready data.

1. Extract

In this phase, ETL pipelines pull data from multiple sources such as ERP systems, CRM platforms, databases, or third-party APIs. This step ensures that all relevant information — whether transactional, operational, or customer-related — is collected in one place.

2. Transform

Once extracted, the data undergoes cleaning, enrichment, and formatting. This transformation stage removes duplicates, corrects inconsistencies, and applies business rules to structure the data. Well-implemented ETL pipelines apply the same rules on every run, so the transformed data is consistent, standardized, and ready for analysis.

3. Load

Finally, the processed data is loaded into the target system, such as a cloud data warehouse like Amazon Redshift, a data lake like Azure Data Lake, or an analytics tool like Power BI. This allows decision-makers to run reports, track KPIs, and uncover business insights with ease.

Real-World Example

Imagine an e-commerce company extracting order details from its ERP, transforming the data to align with reporting standards, and loading it into a Power BI dashboard. The entire process, powered by ETL pipelines, happens automatically and in near real-time — enabling quick, informed decision-making.

Pro Tip: Choosing the right cloud platform can enhance your ETL performance and cost efficiency. If you’re debating between Azure and AWS, assess factors like scalability, integration options, and pricing before committing.

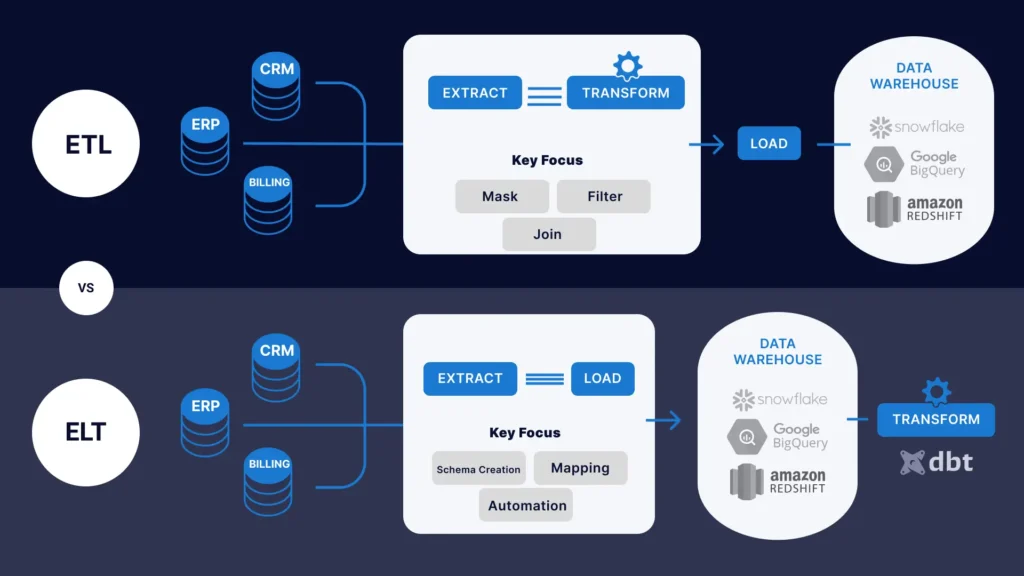

4. ETL Pipelines vs. ELT Pipelines

When discussing cloud data integration, one common debate is ETL pipelines versus ELT pipelines. Both approaches handle data extraction, transformation, and loading — but the sequence and execution differ, leading to variations in performance, scalability, and use cases.

ETL pipelines follow the traditional method:

- Extract data from multiple sources.

- Transform it into a clean, structured format.

- Load it into the destination (data warehouse, analytics tool, or cloud storage).

In contrast, ELT pipelines reverse the last two steps:

- Extract and Load the raw data into the target system first.

- Then Transform it inside the target environment using its processing power.

This difference impacts speed, cost, and flexibility — making the choice between ETL and ELT dependent on business needs, data volume, and technology stack.
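To make the reversed order concrete, here is a small ELT-style sketch. It assumes an in-memory SQLite database as a stand-in for the target warehouse, and the table names and sample rows are invented for illustration. The raw records are loaded untouched, and the cleanup then runs as SQL inside the target.

```python
import sqlite3

conn = sqlite3.connect(":memory:")  # stand-in for the target warehouse

# Extract + Load: land the raw records unchanged, as untyped text.
raw_rows = [
    ("1001", " alice ", "19.99"),
    ("1002", "bob", "5.00"),
    ("1001", " alice ", "19.99"),  # the duplicate stays in the raw table
]
conn.execute("CREATE TABLE raw_orders (order_id TEXT, customer TEXT, amount TEXT)")
conn.executemany("INSERT INTO raw_orders VALUES (?, ?, ?)", raw_rows)

# Transform: runs inside the target, using its own SQL engine.
conn.execute(
    """
    CREATE TABLE orders AS
    SELECT DISTINCT
        CAST(order_id AS INTEGER) AS order_id,
        TRIM(customer)            AS customer,
        CAST(amount AS REAL)      AS amount
    FROM raw_orders
    """
)

raw_count = conn.execute("SELECT COUNT(*) FROM raw_orders").fetchone()[0]
clean_rows = conn.execute("SELECT * FROM orders ORDER BY order_id").fetchall()
print(raw_count, clean_rows)
```

Note that both `raw_orders` and `orders` now exist in the target, which is the storage trade-off that comes with ELT.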

Comparison: ETL Pipelines vs. ELT Pipelines

| Feature | ETL Pipelines | ELT Pipelines |
|---|---|---|
| Data Processing Order | Extract → Transform → Load | Extract → Load → Transform |
| Best For | Complex transformations before storage | Large volumes of raw data with flexible transformations |
| Processing Location | External ETL engine or middleware | Target data warehouse or cloud platform |
| Speed | May be slower for huge datasets due to pre-loading transformations | Faster initial load, but transformation speed depends on target system |
| Data Storage Needs | Stores only transformed, clean data | Stores both raw and transformed data |
| Ideal Use Cases | Legacy systems, strict compliance needs, curated analytics | Cloud-native systems, big data analytics, machine learning workflows |
| Common Tools | Talend, Informatica, Pentaho | Snowflake, BigQuery, Amazon Redshift |

5. Real-Time Cloud Integration with ETL Pipelines

In today’s fast-paced digital world, businesses can’t afford to wait hours or days for updated data. Real-time cloud integration with ETL pipelines keeps information flowing between systems continuously and with minimal delay, enabling immediate insights and actions.

Traditional ETL pipelines often work in scheduled batches, but real-time ETL uses streaming technologies to process data as it’s generated. This is crucial for scenarios like:

- Live dashboards that update instantly.

- Fraud detection systems that act within seconds.

- IoT applications that require constant monitoring.

By leveraging tools like Azure Data Factory, AWS Glue, Apache NiFi, and Apache Kafka, businesses can extract, transform, and load data with minimal delay and deliver it directly to analytics platforms, for example through Power BI streaming datasets.
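The difference between scheduled batches and streaming can be illustrated without any specific platform. The sketch below is an assumption-laden toy: a plain Python generator plays the role of a message stream (it does not use the Kafka or NiFi APIs), the events are made up, and the `loaded` list stands in for a dashboard's backing store. It processes events in small micro-batches as they arrive instead of waiting for a nightly job.

```python
from itertools import islice


def event_stream():
    """Hypothetical source: yields order events one at a time."""
    events = [
        {"order_id": 1, "amount": "10.00"},
        {"order_id": 2, "amount": "bad-value"},  # malformed event
        {"order_id": 3, "amount": "7.50"},
        {"order_id": 4, "amount": "2.50"},
        {"order_id": 5, "amount": "30.00"},
    ]
    yield from events


def transform(event):
    """Transform a single event; return None if the amount cannot be parsed."""
    try:
        return {"order_id": event["order_id"], "amount": float(event["amount"])}
    except ValueError:
        return None


def micro_batches(stream, size):
    """Group a stream into small batches so loads stay frequent but cheap."""
    iterator = iter(stream)
    while batch := list(islice(iterator, size)):
        yield batch


running_total = 0.0
loaded = []  # stand-in for the dashboard's backing store
for batch in micro_batches(event_stream(), size=2):
    clean = [row for row in map(transform, batch) if row is not None]
    loaded.extend(clean)
    running_total += sum(row["amount"] for row in clean)
    print(f"loaded {len(clean)} events, running total {running_total:.2f}")
```

A smaller batch size lowers latency at the cost of more frequent writes to the target, so the right value depends on how fresh the dashboard needs to be.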

Real-time ETL pipelines reduce decision-making latency, improve customer experiences, and allow companies to respond proactively to emerging opportunities or threats. Whether it’s e-commerce order tracking, financial market monitoring, or sensor data analysis, real-time integration is becoming the gold standard for modern data-driven organizations.

6. Scalability and Security of ETL in the Cloud

As data volumes grow, businesses need solutions that can scale effortlessly without compromising performance. Cloud-based ETL pipelines are designed for this very challenge, offering elastic scalability to handle everything from gigabytes to terabytes — or even petabytes — of data.

With modern cloud-native ETL tools, scalability is not just about processing more data; it’s about doing so efficiently and cost-effectively. Platforms like AWS Glue, Azure Data Factory, and Google Cloud Dataflow automatically adjust computing resources to meet demand, ensuring smooth data flows even during peak loads.

Security is equally critical. Robust ETL pipelines incorporate:

- Role-Based Access Control (RBAC) to manage user permissions.

- Data encryption at rest and in transit for maximum protection.

- Compliance with regulations like GDPR, HIPAA, and SOC 2.

- Audit logs to track changes and ensure accountability.
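Two of the controls above, role-based access and audit logging, can be sketched together. The roles, permissions, and user names below are hypothetical, and a real deployment would normally rely on the cloud provider's identity and logging services instead of an in-process dictionary and list.

```python
from datetime import datetime, timezone

# Hypothetical role-to-permission mapping for pipeline operations.
ROLE_PERMISSIONS = {
    "analyst": {"read"},
    "engineer": {"read", "run_pipeline"},
    "admin": {"read", "run_pipeline", "edit_pipeline"},
}

audit_log = []  # in practice: an append-only, tamper-resistant store


def authorize(user, role, action):
    """Return True if the role grants the action; record every attempt."""
    allowed = action in ROLE_PERMISSIONS.get(role, set())
    audit_log.append(
        {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "role": role,
            "action": action,
            "allowed": allowed,
        }
    )
    return allowed


authorize("dana", "analyst", "run_pipeline")  # denied, but still logged
authorize("eli", "engineer", "run_pipeline")  # allowed
```

Logging denied attempts as well as successful ones is what makes the log useful for accountability.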

By combining scalability and enterprise-grade security, cloud ETL pipelines enable businesses to grow confidently while safeguarding sensitive information. This makes them an ideal choice for organizations that demand both performance and protection in their data operations.

7. ETL for Business Dashboards & Data Visualization

In the era of data-driven decision-making, accurate and timely insights are essential. ETL pipelines play a vital role in powering business dashboards and data visualization tools like Power BI, Tableau, and Looker.

By extracting data from multiple sources, transforming it into a clean, consistent format, and loading it into analytics platforms, ETL pipelines ensure your dashboards display only reliable and relevant information. This means decision-makers can trust the numbers they see—whether tracking KPIs, monitoring sales trends, or analyzing customer behavior.

A well-designed ETL pipeline also supports real-time or near real-time updates, making dashboards more responsive and enabling businesses to act quickly on emerging opportunities or risks. Clean data leads to:

- Faster dashboard performance with optimized queries.

- Improved accuracy by eliminating duplicates and inconsistencies.

- Better storytelling through high-quality visualizations.
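One common way a pipeline can speed up dashboards is to pre-aggregate data at load time, so the dashboard queries a small summary table instead of scanning every order. The sketch below assumes this approach; the table names, regions, and figures are invented sample data, and SQLite stands in for the analytics store.

```python
import sqlite3

conn = sqlite3.connect(":memory:")  # stand-in for the analytics store
conn.execute("CREATE TABLE orders (order_date TEXT, region TEXT, amount REAL)")
conn.executemany(
    "INSERT INTO orders VALUES (?, ?, ?)",
    [
        ("2024-01-01", "EU", 20.0),
        ("2024-01-01", "EU", 30.0),
        ("2024-01-01", "US", 15.0),
        ("2024-01-02", "US", 25.0),
    ],
)

# Pre-aggregate once during the load step; dashboards read this table.
conn.execute(
    """
    CREATE TABLE daily_sales AS
    SELECT order_date, region, COUNT(*) AS order_count, SUM(amount) AS revenue
    FROM orders
    GROUP BY order_date, region
    """
)

summary = conn.execute(
    "SELECT * FROM daily_sales ORDER BY order_date, region"
).fetchall()
print(summary)
```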

At Power Soft, we specialize in building custom dashboards powered by advanced ETL pipelines, ensuring you get insights that are not just informative but actionable.

8. Best Practices for Building ETL Pipelines

Designing strong ETL pipelines ensures smooth and reliable data integration. Poorly built pipelines can cause data loss and errors, affecting decisions. Follow these best practices for optimal results:

- Modular Design for Flexibility – Split ETL pipelines into reusable parts like extraction, transformation, and loading to simplify maintenance and updates.

- Logging & Monitoring for Transparency – Use logging and monitoring tools like Apache Airflow or AWS CloudWatch to catch issues early and track data flow.

- Data Validation at Every Stage – Implement automated checks during all ETL steps to maintain clean, accurate data.

- Failover Handling for Reliability – Add failover and auto-restart features to quickly recover from any failures.

- Scalability in Mind – Use cloud-based tools with elastic scaling to handle growing data volumes without slowing down.
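Several of these practices (logging, validation, and recovery from failures) can be combined in a small sketch. Everything here is illustrative: the validation rules, the `flaky_extract` source, and the retry settings are assumptions, and Python's standard `logging` module stands in for a dedicated monitoring service.

```python
import logging
import time

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("etl")


def validate(rows):
    """Split rows into valid and rejected using simple, illustrative rules."""
    valid, rejected = [], []
    for row in rows:
        amount = row.get("amount")
        ok = (
            isinstance(row.get("order_id"), int)
            and isinstance(amount, (int, float))
            and amount >= 0
        )
        (valid if ok else rejected).append(row)
    log.info("validated %d rows, rejected %d", len(valid), len(rejected))
    return valid, rejected


def run_with_retry(step, attempts=3, delay_seconds=0.0):
    """Run a pipeline step, retrying on failure up to `attempts` times."""
    for attempt in range(1, attempts + 1):
        try:
            return step()
        except Exception as exc:
            log.warning("attempt %d/%d failed: %s", attempt, attempts, exc)
            if attempt == attempts:
                raise  # give up and surface the error to the caller
            time.sleep(delay_seconds)


calls = {"count": 0}


def flaky_extract():
    """Hypothetical source that fails once before succeeding."""
    calls["count"] += 1
    if calls["count"] == 1:
        raise ConnectionError("source temporarily unavailable")
    return [{"order_id": 1, "amount": 9.5}, {"order_id": None, "amount": 3.0}]


rows = run_with_retry(flaky_extract)
valid, rejected = validate(rows)
```

Rejected rows are returned instead of silently dropped, so they can be logged, inspected, or routed to a separate table for review.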

How Power Soft Helps with ETL Pipeline Development

At Power Soft, we offer full-stack data pipeline development tailored to your needs. From custom ETL workflows to cloud-based integration, we help companies of all sizes transform how they use data.

✅ Our Services:

- Cloud Integration (Azure & AWS)

- Data Analytics (Power BI, Tableau)

- Business Dashboards

- ERP & CRM Integration

- Custom Web App Development

📞 Need help building an ETL pipeline? Visit https://powersoft.agency

✅ Conclusion

ETL pipelines are essential for turning scattered data into organized, actionable insights. From cloud sync to analytics and beyond, building an effective ETL strategy is a must for modern data-driven organizations. At Power Soft, we provide scalable, secure, and efficient ETL solutions that align with your business goals.

Power Soft helps you choose the ideal cloud platform and scale your business with confidence, backed by expert guidance and tailored cloud solutions.

📧 Email: contact@powersoft.agency

🌐 Website: www.powersoft.agency/services

📞 Call & WhatsApp 1: +1 561-556-0226

📞 Call & WhatsApp 2: +88 01966 773464